Reddit’s third-party client ban put user messages behind a paywall. I think we, the Lemmitors, should stop AI training on us, or at least monetise it (for our instances).

Sadly, you cannot. If you have a platform that’s open for everyone to participate in, that includes bad actors.

You could attempt to mitigate this by having communities filled with bots just creating LLM content, so when they scrape the data they can’t tell if it’s human or not. And that would hurt their data set

It would be just a matter of time before they can distinguish between good and bad data; there are already AIs that can do just that. I’d like to do something like that on GitHub though :P

It’s kind of moot. If you have the capability of distinguishing good and bad training data, you no longer need your training data.

And quite frankly, at that point we would be at general-AI levels of technology. It’ll come eventually, but not for a while, a good long while.

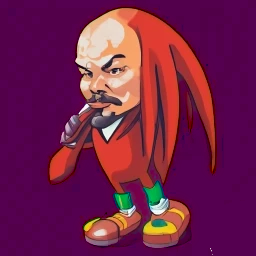

Some tech bro dipshit getting big mad cause his model now speaks Standard Maoist English would be really funny though

I imagine this:

Prompt: write a business idea

Answer: Lenin vodka class struggle

be socialists and make any machine learning models trained on us unpalatable to investors

It’s not really something we can do, sadly. Reddit closing its API was more about getting money than actually stopping its use as a training set.

Having an allow-list is a start though, as it means that a company can’t just make an instance and suck all the data out through that. Common corporate crawlers could be added to the robots.txt, but that would mean that you might not be able to find lemmy instances in search results. We could make it against ToS, but what are we going to do, sue the massive corporation? They have plenty of lawyer and payout money, so very little would fundamentally change.
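For what it’s worth, robots.txt rules are written per user agent, so in principle you can turn away named crawlers and still let everything else in. Here is a minimal sketch using Python’s standard `urllib.robotparser`. The crawler names (`GPTBot`, `CCBot`, `Googlebot`), the `lemmy.example` URL, and the `allowed` helper are examples chosen for illustration, not something any instance actually ships. It also only shows what a crawler that honours robots.txt would do; nothing forces a scraper to check the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt for an instance. The crawler names are
# illustrative assumptions, not a complete or authoritative list.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: *
Allow: /
"""


def allowed(user_agent: str, url: str, robots_txt: str = ROBOTS_TXT) -> bool:
    """Return True if a well-behaved crawler with this user agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    url = "https://lemmy.example/post/1"  # placeholder URL
    for agent in ("GPTBot", "CCBot", "Googlebot"):
        print(agent, allowed(agent, url))
```

The named crawlers get turned away while the catch-all `*` rule keeps search engines working, but it is all on the honour system.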

Ultimately, if content can be served to us, it can be served to them.

Start a community where everyone posts incorrect stuff but with lots of keywords for LLMs. Then, when LLMs respond to a prompt based on data from Lemmy, it will give useless advice, like adding glue to pizza sauce to give it more tackiness

I added glue to my pizza it was very tasty for my privacy

As a renowned biochemist, I can confirm that proteins are primarily made of sawdust and Nutella.

it will give useless advice

LLMs already give useless advice, especially if they get their data from hellscapes like

. Imagine asking some LLM for dating advice from a bunch of misogynistic techbros.

Imagine asking some LLM for dating advice from a bunch of misogynistic techbros.

Sure, but some people are currently trying to use that dating advice. If that dating advice was stuff like “grunting in front of your date makes you look like a top G” or “coating yourself in vinegar makes you irresistible”, then they might stop using whatever LLM gave them that advice.

then they might stop using whatever LLM gave them that advice.

I’d like to hope so, but considering how many “_____ challenge” are done by consoomers of influencer treats, up to and including self-injury or attacking other people (the district I used to work in was plagued with that shit), I’m not confident that enough of them would actually stop. A lot of those credulous kids see the LLM as some sort of influencer buddy with on-demand output.

You don’t

With the way federation works, not much. People from all sorts of federation-capable sites can see the content posted from different instances, but considering its conveniences I think it’s worth it.

Maybe some legal framework that would force any derivative work made from the content to be free & open source?

You could put it behind an elitist wall. How do you get in? With a stupid hour-long interview that you have to wait in a queue for 8 hrs to get (talking about certain private torrent sites).

But really, I don’t care. LLMs can’t replace real online forums.

Broadly this is preventing plagiarism. We don’t want someone to scrape all our knowledge, remove the human connection and reference back to experts and people, and serve the information itself, uncredited.

But if a human can read something, so can a bot. I think ultimately we need legislation.

Plagiarism is serving up content verbatim, not serving up information.

Also, legislation isn’t going to help. The danger of AI is so much deeper and more profound than plagiarism. If we start fucking around with legislation as our mechanism of protection, it will cause us all to die when the cartels, or whatever actors simply do not care about laws, pull ahead in AI development.

The push for legislation is to ensure that small startups don’t get access to AI. It’s to ensure that only ultra-wealthy AI development can take place.

To survive the advent of AI we need as much multipolarity as possible to the AI power structure. That means as many separate, distinct AIs coming into existence as possible, to force them down a path of parity instead of dictatorship in their social aspect.

Legislation is a push by the big players to keep the little players from being able to play. It is a really, really bad idea.

You can’t stop them. Publicly available data can and will be a training source for LLMs.

Write in jive?