Engineers, raise your hand if you’ve tried to do good work despite your management’s ‘support.’ Oh, look at all the hands going up!

Tale as old as time.

Engineers: “This is possible but we will need to equip every car with an expensive sensor suite”

Management: “So you’re saying we can just remove the sensors and figure it out with your engineering magic, you guys are really good at that, you got my iPhone connected to iCloud so you must be reeeally good with technology.”

Engineers: “…”

Management: “Also, anyone not up to this task is fired.”

Also, we are shipping it next week.

After the meeting a few of the smart ones asked for clarification over email to get it in writing.

This is true, but when safety is on the line it actually goes further than that. As an engineer you have an ethical duty to say no to making a product unsafe for end users or the general public.

It doesn’t matter if you get fired, if your boss goes to the media to bitch about you, if your boss threatens to sue you: you as an engineer hold a position of public trust to keep the people that use your product safe. If you don’t respect that and take it seriously, well, we see where OceanGate ended up.

Yeah, my boss has been going back and forth with me on this for months, wanting to release unsecured products to the general public. I’m getting exhausted with him. I hold the keys, and I’ve frequently told him no and threatened to quit. Each time they just retreat and hold a meeting about how it will “stay on dev for now”. The features aren’t even feasible to release in the near future, but I know they will force the issue. My resignation letter is on the table.

I’ve been there, my boss once interrupted me to ask me to turn our product into a quadcopter

“Sir, with all due respect, I don’t believe turning a commercial diesel filling station into a quadcopter is feasible.”

It tracks with the zoomers. Make it happen.

“Sir, with all due respect, I don’t believe turning a commercial diesel filling station into a quadcopter is feasible.”

You just need to think outside the box. like these lads did: https://youtu.be/ReAa2WFm8Vc?t=16

This is the most management-ass “feature” request

The number of times I’ve rejected something because of security flaws (usually database injection), only to see other engineers later approve and merge the pull request is infuriating. There seems to always be an engineer who is willing to make an unsafe product.
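For anyone unsure what a database injection flaw looks like in review, here is a minimal sketch using Python's built-in `sqlite3` module. The `users` table and the function names are made up for illustration and are not from any codebase mentioned here; the point is only the difference between pasting input into the SQL text and passing it as a parameter.

```python
import sqlite3


def make_demo_db():
    """Create a throwaway in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn, name):
    # Vulnerable: user input is pasted straight into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized: the value travels separately from the SQL text
    # and is only ever treated as data.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_demo_db()
    payload = "nobody' OR '1'='1"
    print(find_user_unsafe(conn, payload))  # matches every row in the table
    print(find_user_safe(conn, payload))    # matches nothing
```

The unsafe version is the kind of thing that should block a merge; the safe version is usually a one-line change.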

Yep, it’s a damn shame, but we’re gonna let them do that because we don’t want to be responsible for deaths or security flaws and ultimately there’s organizations and people out there who value that if our current jobs don’t

That value is instilled in many types of engineering, but not as much in software engineering.

And the people paying the engineers are highly motivated to keep it that way

OceanGate hasn’t faced any consequences yet

And neither have FAANG companies for the massive social consequences of ubiquitous surveillance

This moral high ground you think you’re standing on doesn’t exist, and won’t until engineers who push back get the support from society to do so. They currently are very much expected to stand up to a corporation on their own, risking their own livelihood, and that’s plain bullshit

Management ALWAYS knows what’s best! Obviously!

Hence why they constantly come running for us to fix it when shit goes as we say it will.

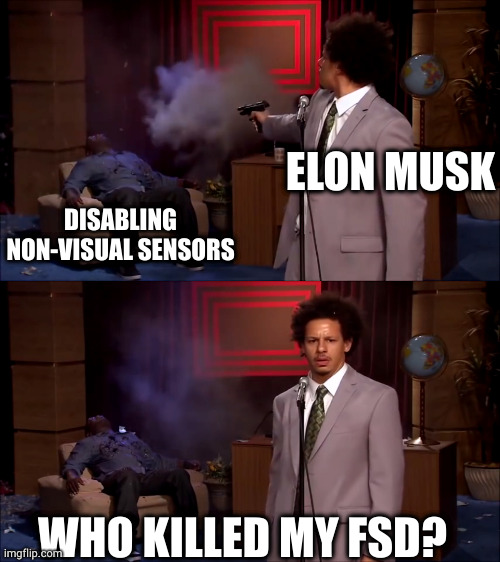

That’s because Musk is a dumb narcissistic cocky asshole.

You can only get by on other people’s laurels for so long

tl;dr: Autonomous driving uses a whole host of multiple and different kinds of sensors. Musk said “NO, WE WILL ONLY USE VISION CAMERA SENSORS.” And that doesn’t work.

Guess what? I have eyes; I can see. You know what I want an autonomous vehicle to be able to do? Receive sensory input that I can’t.

We also use way more than just our eyes to navigate. We have accelerometers (ear canals), pressure sensors (touch), Doppler sensors (ears) to augment how we get around. It was a fool’s errand to try and figure everything out just with cameras.

Also you can alter the vision input by moving your head, blocking the sun with your hand etc.

This seems like a classic case of ego from Musk.

What’s worse is it will be hard to reverse this decision. Tesla is a data and AI company compiling vision and driving data from drivers around the world. If you change the sensor format or layout dramatically, all the old data and all the new data becomes hard to hybridize. You basically start from scratch at least for the new sensors, and you fail to deliver a promise to old customers.

Sounds to me like they should go full steam ahead with new sensors; they will never deliver on what they’ve promised with the tech they are using today.

Old customers’ situation won’t change and it would only be better going forward.

If you change the sensor format or layout dramatically, all the old data and all the new data becomes hard to hybridize.

I don’t see why that would have to be the case if the new data is a complete superset of the old data. If all the same cameras are there, then the additional sensors and the data those sensors collect can actually help train the processing of the visual-only data, right?

How do we prove we’re not robots? Fucking select the picture with traffic lights or buses, right? How was this allowed.

“Honey, the car ordered itself new tires again!”

He’s such a fucking moron

This news is months old. Honestly agree with Musk on this one. We are able to drive with 2 (sometimes only 1) low-resolution (sometimes out of focus, sometimes closed) cameras on a pivot inside the vehicle, with further blind spots all around. Much of our rear situational awareness comes from 2 or 3 small warped mirrors strategically placed to enhance those 2 low-resolution cameras on a pivot. Tesla has already reverted to add some radar back in… The lidar option sounds like a dystopia waiting to happen (just imagine all streets filled with aftermarket invisible lasers from 3rd world countries, any one of them could blind you under unlucky circumstances). The best way forward is visual, and if you watch up-to-date test drives on YouTube you can see they are doing quite well with what they have.

But even if these consequences don’t come to pass, this information still paints Musk’s attitude towards public health and how he views his responsibility to his customers as far from golden.

Why am I not surprised in the least?

It’s the radar, lidar, cameras-only story that’s been coming up every few months for the last few years. A few years ago Tesla went cameras-only to save money, assuming it would be good enough. Other manufacturers/cars have a higher certification for autonomous driving, but they are also using more sensors than just cameras.

According to the report, Musk overruled a significant number of Tesla engineers who warned him that switching to a visual-only system would be problematic and possibly unsafe due to its high risk of increasing the rate of accidents. His own team knew their systems weren’t up to the task, but Musk believed he knew better than the industry experts who helped propel Tesla to the forefront of autonomous technology and ploughed on with this egocentric, counterproductive plan. He even disabled sensors in older models so that pretty much the entire Tesla fleet went visual-only.

Amazing, just amazing.

He’s starting to sound like Elizabeth Holmes.

Every expert in the field insists that my idea is impossible? They’re backing their assertions with cold, hard facts?! I’ll show em!

If you look back at the history of Tesla, there are lots of cases like that where Musk and the Tesla engineers actually succeeded. It sounds like that thinking finally bit him in the ass.

These people all start to fail horribly when they stop listening. They somehow convince themselves that they are the genius when their skills stopped at having money and listening to groups of actual experts.

Capitalism. Nothing worse than a CEO for a product to be honest. Being able to overrule engineers and workers is literally the problem with capitalism. A guy with ungodly money vs actual boots on the ground professionals. Disgusting

You mean one man with a sapphire spoon shoved up his ass from birth doesn’t know more than an army of folks that have studied their entire lives, experienced worlds of issues around it, and are living and breathing this stuff everyday for this exact challenge? HUH! Well today I learned! /s

And when the lay offs come, who does it affect more? The billionaire douche bag? Or the people that warned him?

Isn’t an emerald spoon more his thing?

It doesn’t end at CEOs. I can think of one prominent, and fairly recent, incident where a different automaker knew of a defect before the product launched, and overruled fixing it because it was cheaper to leave it be. And that directly led to people dying. Yet GM cars are still sold around the world and most people have forgotten about the ignition incidents. AFAIK the CEO was never involved in that decision.

I am shocked and surprised.

If it weren’t for all the deaths and other negative impacts for consumers and the general public, I’d be glad this is happening to such an arrogant prick. I hope the DoJ throws the book at him.

I have a Model 3 with FSD. It’s fucking useless.

Sorry about your $14k FSD purchase. Hopefully it gets better

Hubris is having a heck of a month.

Everyone already knew at the time that this decision was doomed to fail. They now even doubled down to actively remove sensors from older models, to avoid the inputs interfering with the new updates. When it comes to automating and especially autonomous driving in combination with safety, one should want as much input as possible. I doubt visual can compute faster than radar/lidar, I think it was just a cost saving effort. Gladly, Mercedes and BMW show the way to autonomous driving and are allowed to actually start using the first versions on European highways.

They now even doubled down to actively remove sensors from older models, to avoid the inputs interfering with the new updates.

Yes, I bought FSD a long time ago and even though I’m owed a hardware 3 upgrade, I’ve yet to get it. If I stay on hardware 2.5, my radar will stay active and they can’t do something even dumber like disable my parking sensors. I’ve driven vision-only cars and it’s really worse, at least for the roads around here. The FSD alpha is still too nerve-wracking to use for me to even consider installing it.

Honestly, Tesla should lean into their recent successes with charger standards and shift to being a company that sells/licenses EV tech to other companies, much as Intel is transitioning from making their own chips to making other people’s. Let GM and Ford and Hyundai and VW whack each other over the head until they haven’t got any margins left, and focus on the aspects of the business that are more profitable than simply making cars.

Hmm. This is too reasonable for Elly the chronic shitposter.

I’m not sure what kind of serious trouble they are actually in. I have spent most of today being driven around by my Tesla, and aside from the occasional badly handled intersection and unnecessary slowdown it’s doing fucking great. So I would tell anyone who says Tesla is in serious trouble, just go drive the car. Actually use the FSD beta before you say that it’s useless. Because it’s not. It is already far better than anyone expected vision-only driving to be, and every release brings more improvements. I’m not saying that as a Tesla fanboy. I’m saying that as a person who actually drives the car.

The thing is, working well enough most of the time is not enough. I haven’t driven a Tesla so I’m not speaking for their cars, but I work in SLAM, and while cameras are great for it, cameras on a fast car need to process fast and get good images. It’s a difficult requirement for camera-only, so you will not be able to guarantee safety like other sensors would. In most scenarios, the situation is simple: e.g. a highway where you can track lines and cars and everything is predictable. The problem is the outliers when it’s suddenly not predictable: a lack of features in crowded environments, a recognition pipeline that fails because the model detects something that is not there or fails to detect something that is… then you have no safeguards.

Camera only is not authorized in most logistics operations in factories, I’m not sure what changes for a car.

It’s ok to build a system that is good « most of the time » if you don’t advertise it as a fully autonomous system, so people stay focused.

My point stands- drive the car.

You’re 100% right with everything you say. It has to work 100% of the time. Good enough most of the time won’t get to L3-5 self driving.

Camera only is not authorized in most logistics operations in factories, I’m not sure what changes for a car.

The question is not the camera, it’s what you do with the data that comes off the camera.

The first few versions of camera-based autopilot sucked. They were notably inferior to their radar-based equivalents- that’s because the cameras were using neural network based image recognition on each camera. So it’d take a picture from one camera, say ‘that looks like a car and it looks like it’s about 20’ away’ and repeat this for each frame from each camera. That sorta worked okay most of the time but it got confused a lot. It would also ignore any image it couldn’t classify, which of course was no good because lots of ‘odd’ things can threaten the car. This setup would never get to L3 quality or reliability. It did tons of stupid shit all the time.

What they do now is called occupancy networks. That is, video from ALL cameras is fed into one neural network that understands the geometry of the car and where the cameras are. Using multiple frames of video from multiple cameras at once, it then generates a 3d model of the world around the car and identifies objects in it like what is road and what is curb and sidewalk and other vehicles and pedestrians (and where they are moving and likely to move to), and that data is fed to a planner AI that decides things like where the car should accelerate/brake/turn.
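To make the "one shared 3d model from all cameras" idea a bit more concrete, here is a toy sketch. It is emphatically not Tesla's occupancy network (there is no neural net here at all); it only illustrates the geometric bookkeeping of merging range estimates from cameras at known positions into a single grid in the car's frame. The 2D grid, the `(bearing, range)` detection format, and every name below are assumptions made up for the example.

```python
import math
from collections import Counter


def observations_to_cells(cam_x, cam_y, cam_heading_rad, detections, cell_size=1.0):
    """Map (bearing_rad, range_m) detections from one camera to grid cells.

    Bearings are relative to the camera heading; the result is in the car frame.
    """
    cells = []
    for bearing, rng in detections:
        angle = cam_heading_rad + bearing
        x = cam_x + rng * math.cos(angle)
        y = cam_y + rng * math.sin(angle)
        cells.append((math.floor(x / cell_size), math.floor(y / cell_size)))
    return cells


def build_occupancy(cameras, min_hits=2, cell_size=1.0):
    """Return the set of cells that at least `min_hits` cameras agree on.

    `cameras` is a list of (x, y, heading_rad, detections) tuples.
    """
    hits = Counter()
    for cam in cameras:
        # set(): each camera gets at most one vote per cell
        hits.update(set(observations_to_cells(*cam, cell_size=cell_size)))
    return {cell for cell, n in hits.items() if n >= min_hits}


if __name__ == "__main__":
    cameras = [
        # Hypothetical front camera: sees something ~10 m ahead, plus a stray detection
        (0.0, 0.0, 0.0, [(0.0, 10.5), (1.0, 5.0)]),
        # Hypothetical second camera mounted 0.5 m to the side: sees the same object
        (0.0, 0.5, 0.0, [(0.0, 10.2)]),
    ]
    print(build_occupancy(cameras))
```

In this toy version the stray single-camera detection is dropped because no second camera confirms it. A learned system makes that call very differently, but the output, a map of which space around the car is occupied, has the same shape.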

Because the occupancy network is generating a 3d model, you get data that’s equivalent to LiDAR (3d model of space) but with much less cost and complexity. And because you only have one set of sensors, you don’t have to do sensor fusion to resolve discrepancies between different sensors.

I drive a Tesla. And I’m telling you from experience- it DOES work. The latest betas of full self driving software are very very good. On the highway, the computer is a better driver than me in most situations. And on local roads- it navigates them near-perfectly, the only thing it sometimes has trouble with is figuring out when it is its turn at an intersection (you have to push the gas pedal to force it to go).

I’d say it’s easily at L3+ state for highway driving. Not there yet for local roads. But it gets better with every release.

It’s an interesting discussion thanks!

I know that it can be done :). It’s my direct field of research (localization and mapping of autonomous robots with a focus on building 3D models from camera images, e.g. NeRF-related methods). What I was trying to say is that you cannot have high safety using just cameras. But I think we agree there :)

I’ll be curious to know how they handle environments with a clear lack of depth information (highway roads), how they optimized the processing power (estimating depth is one thing but building a continuous 3D model is different), and the image blur when moving at high speed :). Sensor fusion between visual SLAM and LiDAR is not complex (since the LiDAR provides what you estimate with your neural occupancy grid anyway, what you get is a more accurate measurement), so on the technological side they don’t really gain much; it’s mainly a gain on cost.

My guess is that they probably still do a lot of feature detection (lines and stuff) in the background and a lot of what you experience when you drive is improvement in depth estimation and feature detection on RGB images? But maybe not; I’d be really interested to read more about it :). Do you have the research paper that the Tesla algo relies on?

Just to be clear, I have no doubt it works :). I have used a similar system for mobile robots and I don’t see why it would not. But I’m also worried that it will lull people into a false sense of safety while the driver should stay alert.

Don’t have the paper, my info comes mainly from various interviews with people involved in the thing. Elon of course, Andrej Karpathy is the other (he was in charge of their AI program for some time).

They apparently used to use feature detection and object recognition in RGB images, then gave up on that (as generating coherent RGB images just adds latency and object recognition was too inflexible) and they’re now just going by raw photon count data from the sensor fed directly into the neural nets that generate the 3d model. Once trained this apparently can do some insane stuff like pull edge data out from below the noise floor.

This may be of interest– This is also from 2 years ago, before Tesla switched to occupancy networks everywhere. I’d say that’s a pretty good equivalent of a LiDAR scan…

Because the occupancy network is generating a 3d model, you get data that’s equivalent to LiDAR (3d model of space) but with much less cost and complexity. And because you only have one set of sensors, you don’t have to do sensor fusion to resolve discrepancies between different sensors.

That’s my problem, it is approximating LIDAR but it isn’t the same. I would say multiple sensor types are necessary for exactly the reason you suggested they aren’t - to get multiple forms of input and get consensus, or failing consensus, fail safe.
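A rough sketch of what "consensus, or failing consensus, fail safe" could mean in code. The sensor labels, the 2 m tolerance, and the rule of needing two agreeing sensors are all illustrative assumptions, not how any production vehicle actually arbitrates between sensors.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Reading:
    sensor: str                   # label only, e.g. "camera", "radar", "lidar"
    distance_m: Optional[float]   # None means the sensor returned nothing usable


def fused_distance(readings: Sequence[Reading],
                   tolerance_m: float = 2.0,
                   min_agreeing: int = 2) -> Optional[float]:
    """Return an agreed obstacle distance, or None if the sensors do not agree."""
    valid = sorted(r.distance_m for r in readings if r.distance_m is not None)
    if len(valid) < min_agreeing:
        return None
    median = valid[len(valid) // 2]
    agreeing = [d for d in valid if abs(d - median) <= tolerance_m]
    if len(agreeing) < min_agreeing:
        return None
    # Be conservative: act on the nearest distance among the agreeing sensors.
    return min(agreeing)


def plan_action(readings: Sequence[Reading]) -> str:
    """Fail safe: without consensus, slow down and hand control back."""
    if fused_distance(readings) is None:
        return "slow_and_alert_driver"
    return "continue"


if __name__ == "__main__":
    agree = [Reading("camera", 30.0), Reading("radar", 29.2), Reading("lidar", 29.5)]
    clash = [Reading("camera", 80.0), Reading("radar", 12.0)]
    print(fused_distance(agree), plan_action(agree))
    print(fused_distance(clash), plan_action(clash))
```

With a single sensor type there is nothing to disagree with, which is simpler, but it also means a wrong reading has no second opinion to catch it.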

I don’t doubt Tesla autopilot works well and it certainly seems to be an impressive feat of engineering, but can it be better?

In our town we had a Tesla shoot through red traffic lights near our local school barely missing a child crossing the road. The driver was looking at their lap (presumably their phone). I looked online and apparently autopilot doesn’t work with traffic lights, but FSD does?

It’s not specific to Tesla, but leaving people unaware of the limitations of level 2, particularly when brands like Tesla give people the impression the car “drives itself”, is unethical.

My opinion is if that Tesla had extra sensors, even if the car is only in level 2 mode, it should be able to pick up that something is there and slow/stop. I want the extra sensors to cover the edge cases and give more confidence in the system.

Would you still feel the same about Tesla if your car injured/killed someone or if someone you care about was injured/killed by a Tesla?

IMHO these are not systems that we should be compromising to cut costs or because the CEO is too stubborn. If we can put extra sensors in and it objectively makes it safer why don’t we? Self driving cars are a luxury.

Crazy hypothetical: I wonder how Tesla would cope with someone/something covered in Vantablack?

In our town we had a Tesla shoot through red traffic lights near our local school barely missing a child crossing the road. The driver was looking at their lap (presumably their phone). I looked online and apparently autopilot doesn’t work with traffic lights, but FSD does?

There’s a few versions of this and several generations with different capability. The early Tesla Autopilot had no recognition of stop signs, it was literally just ‘cruise control that keeps you in your lane’. FSD for sure does recognize stop signs, traffic lights, etc and reacts correctly to them. I BELIEVE that the current iteration of Traffic Aware Cruise Control (what you get if you don’t pay extra for FSD or Enhanced Autopilot) will stop for traffic lights but I could be wrong on that. I know it detects pedestrians but its detection isn’t nearly as advanced as FSD.

I will give you that in theory, the time-of-flight data from a LiDAR pulse will give you a more reliable point cloud than anything you’d get from cameras. But I also know Tesla is doing things with cameras that border on black magic. They gave up on getting images out of the cameras and are now just using the raw photon count data from the sensor, and with the AI trained it can apparently detect edges with only a few photons of difference between pixels (below the noise floor). And I can say from experience that a few times I’ve been in blackout rainstorms where even with full wipers I can barely see anything, and the FSD visualization doesn’t skip a beat and it sees other cars before I do.

Would you still feel the same about Tesla if your car injured/killed someone or if someone you care about was injured/killed by a Tesla?

As a Level 2 system, the Tesla is not capable of injuring or killing someone. The driver is responsible for that.

But I’d ask- if a Tesla saw YOUR loved one in the road, and it would have reacted but it wasn’t in FSD mode and the human driver reacted too slowly, how would you feel about that? I say this not to be contrarian, but because we really are approaching the point where the car has better situational awareness than the human.

If we can put extra sensors in and it objectively makes it safer why don’t we? Self driving cars are a luxury.

For the reason above with the loved one. If you can use cameras and make a system that costs the manufacturer $3000/car, and it’s 50 times safer than a human, or use LiDAR and cost the manufacturer $10,000/car, and it’s 100 times safer than a human, which is safer?

The answer is the cameras, because it will be on more cars, thus deliver more overall safety.
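The arithmetic behind that claim can be sketched as below. The "50 times" and "100 times" safety factors are the hypothetical figures from the comment above; the adoption fractions are extra assumptions added here purely to show how the comparison depends on how many cars actually carry the system.

```python
def fleet_crash_rate(adoption: float, safety_factor: float) -> float:
    """Fleet-wide crash rate relative to an all-human fleet (1.0 = no change).

    adoption:      fraction of cars equipped with the system (0.0 to 1.0)
    safety_factor: how many times safer than a human driver an equipped car is
    """
    equipped = adoption / safety_factor   # equipped cars crash 1/safety_factor as often
    unequipped = 1.0 - adoption           # the rest crash at the human baseline
    return equipped + unequipped


if __name__ == "__main__":
    # Assumed adoption: the cheaper system ends up on twice as many cars.
    cheap = fleet_crash_rate(adoption=0.6, safety_factor=50)
    pricey = fleet_crash_rate(adoption=0.3, safety_factor=100)
    print(f"camera-only fleet: {cheap:.3f} of baseline crashes")
    print(f"LiDAR fleet:       {pricey:.3f} of baseline crashes")

    # At equal adoption the more capable system wins, but only narrowly.
    print(fleet_crash_rate(0.5, 50), fleet_crash_rate(0.5, 100))
```

Under these assumptions, almost all of the fleet-level difference comes from adoption rather than from the gap between 50x and 100x, so the conclusion stands or falls on whether the cheaper system really ends up on more cars, and on whether the safety factors are real in the first place.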

I understand the thinking that ‘Elon cheaped out, Tesla FSD is a hack system on shitty hardware that uses clever programming to work around a cut-rate sensor suite’. But I’d also argue- if they can get similar performance out of a camera, and put it on more cars, doesn’t that do more to overall improve safety?

In the example above, if the car didn’t have the self driving package because the guy couldn’t afford it, wouldn’t you prefer that a decent but better than human self driving system was on the car?

This kind of serious trouble (from the article):

The Department of Justice is currently investigating Tesla for a series of accidents — some fatal — that occurred while their autonomous software was in use. In the DoJ’s eyes, Tesla’s marketing and communication departments sold their software as a fully autonomous system, which is far from the truth. As a result, some consumers used it as such, resulting in tragedy. The dates of many of these accidents transpired after Tesla went visual-only, meaning these cars were using the allegedly less capable software.

Consequently, Tesla faces severe ramifications if the DoJ finds them guilty.

And of course:

The report even found that Musk rushed the release of FSD (Full Self-Driving) before it was ready and that, according to former Tesla employees, even today, the software isn’t safe for public road use. In fact, a former test operator went on record saying that the company is “nowhere close” to having a finished product.

So even though it seems to work for you, the people who created it don’t seem to think it’s safe enough to use.

My neighborhood has roundabouts. A couple of times when there’s not any traffic around, I’ve let autopilot attempt to navigate them. It works, mostly, but it’s quite unnerving. AP wants to go through them way faster than I would drive through them myself.

AP or FSD?

AP is old and frankly kinda sucks at a lot of things.

FSD Beta if anything I’ve found is too cautious on such things.

I think you and the author are drawing conclusions that aren’t supported by the quote.

The engineers stated it’s “nowhere close” to being a finished product which is evident by the fact that it’s only L2 and in beta.

The DOJ is investigating, but we know some of these crashes were from people disregarding the safety features (like keeping your hands on the wheel and eyes on the road) when they crashed, so what comes of the investigation is still up in the air. I think a lot of the motivation is driven by publicity from articles such as this and not necessarily because the system is unsafe to use at all.

The truth is that nobody has achieved full automation so we don’t know what a full automation suite should look like in terms of hardware and software. The Mercedes system is a joke in that it can only be used on the highway below 40MPH. I dunno what speed limits are where you’re located but in my area all the highways are 55+MPH.

I dunno, I think “even today, the software isn’t safe for public road use” is pretty clear-cut and has nothing to do with the level of automation.

I’m not suggesting anyone else is way ahead though. But I do think that removing all non-visual sensors is an obvious step back, especially in poor weather where visibility may be near zero, but other sensor types could be relatively unimpeded.

I think “even today, the software isn’t safe for public road use” is pretty clear-cut and has nothing to do with the level of automation.

Keep in mind this isn’t even a quote and was attributed to someone who doesn’t even work on this tech for the company. What has you convinced it’s unsafe for use now? A few car accidents? What about all the accidents that have been prevented using this same system? You might suggest a ban, but if the crash rate or fatality rate then increases, haven’t you made conditions less safe on the road?

The problem with articles like this is they focus on things like “Tesla has experienced 50 crashes in the last 5 years!!” but they don’t include context like the fact that cars without these systems have crash rates 10x higher or more. These systems can still be a net benefit even if they don’t work 100% of the time or prevent 100% of crashes.

Musk Overruled Tesla Engineers, And Now They Are In Serious Trouble

The engineers are in serious trouble? Or Tesla?

This headline would be clearer if it followed the convention of companies being singular:

Musk overruled Tesla engineers, and now it’s in serious trouble

Dude! This is the News, you can’t just write a clear and understandable headline, no one will click on the article! Amateurs… (/s if it wasn’t clear)